Fast Algorithms and Integral Equation Methods (CS 598APK) Fall 2017

| What | Where |

|---|---|

| Time/place | Wed/Fri 2:00pm-3:15pm 1109 Siebel / Catalog |

| Class URL | https://bit.ly/fastalg-f17 |

| Class recordings | Echo 360 |

| Piazza | Discuss » |

| Calendar | View » |

Class Projects

Materials, reports, and presentations from the projects that students completed as part of this course are now available.

Homework

- Homework set 1

- Homework set 2

- Homework set 3

- Project Proposal

- Homework set 4

- Project Presentation Signup

(use

@illinois.eduto sign in) - Homework set 5

- Project Material Submission

Why you should take this class

Many of the algorithms of introductory scientific computing have super-linear runtime scaling. Gaussian elimination or LU decomposition are good examples, their runtime scales as $O(n^3)$ with the number of unknowns $n$. Even simple matrix-vector multiplication exhibits quadratic scaling. Problems in scientific computing, especially those arising from science and engineering questions, often demand large-scale computation in order to achieve acceptable fidelity, and such computations will not tolerate super-linear, let alone quadratic or cubic scaling.

This class will teach you a set of techniques and ideas that successfully reduce this asymptotic cost for an important set of operations. This leads to these techniques being called "fast algorithms". We will begin by examining some of these ideas from a linear-algebraic perspective, where for matrices with special structure, large cost gains can be achieved. We will then specialize to PDE boundary value problems, which give rise to many of the largest-scale computations. We will see that integral equations are the natural generalization of the linear-algebraic tools encountered earlier, and we will understand the mathematical and algorithmic foundations that make them powerful tools for computation. All throughout, we will pay much attention to the idea of representation–i.e. the choice of what the numerical unknowns of the system to be solved should be.

Instructor

Course Outline

I will insert links to class material, books, and papers into this tree as time goes on.

Note: the section headings in this tree are clickable to reveal more detail.

- Introduction

-

Dense Matrices and Computation

<li data-jstree='{"icon": "fa fa-paperclip"}'> <a href="http://arxiv.org/abs/0909.4061">Finding structure with randomness: Probabilistic algorithms for constructing approximate matrix decompositions by Halko/Martinsson/Tropp</a> </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Sources and Targets </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Point Interactions and Matrix Entries </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Rank and Complexity </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Numerical Rank </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Representation as Right Preconditioning </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Conditioning of Derivative Matrices.html">Demo: Conditioning of Derivative Matrices</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Conditioning of Derivative Matrices.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Conditioning of Derivative Matrices.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Conditioning of Derivative Matrices.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Floating point vs Finite Differences.html">Demo: Floating point vs Finite Differences</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Floating point vs Finite Differences.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Floating point vs Finite Differences.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Floating point vs Finite Differences.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Rank of a Potential Evaluation Matrix.html">Demo: Rank of a Potential Evaluation Matrix</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Rank of a Potential Evaluation Matrix.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Rank of a Potential Evaluation Matrix.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/Rank of a Potential Evaluation Matrix.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-file-text-o"}'> <a href="repocur:demos/upload/01-dense-matrices-and-computation/m_echelon.py">Code: m_echelon.py</a> </li> </ul> -

Tools for Low-Rank Matrices

<li data-jstree='{"icon": "fa fa-file-o"}'> Randomized Low-Rank Approximation </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Randomized SVD </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Finding an Orthogonal Basis for the Range of a Matrix </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Rank-Revealing QR </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Interpolative Decomposition </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Interpolative Decomposition.html">Demo: Interpolative Decomposition</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Interpolative Decomposition.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Interpolative Decomposition.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Interpolative Decomposition.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Randomized SVD.html">Demo: Randomized SVD</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Randomized SVD.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Randomized SVD.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Randomized SVD.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Rank-Revealing QR.html">Demo: Rank-Revealing QR</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Rank-Revealing QR.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Rank-Revealing QR.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/02-tools-for-low-rank/Rank-Revealing QR.ipynb">Download Jupyter notebook</a> </li> </ul> </li> </ul> -

Rank and Smoothness

<li data-jstree='{"icon": "fa fa-file-o"}'> Taylor Expansions </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Special-Purpose Expansions </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Validity of Low-Rank Representations </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Checking Rank Estimates.html">Demo: Checking Rank Estimates</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Checking Rank Estimates.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Checking Rank Estimates.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Checking Rank Estimates.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Multipole and Local Expansions.html">Demo: Multipole and Local Expansions</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Multipole and Local Expansions.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Multipole and Local Expansions.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Multipole and Local Expansions.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Skeletonization using Proxies.html">Demo: Skeletonization using Proxies</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Skeletonization using Proxies.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Skeletonization using Proxies.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/03-rank-and-smoothness/Skeletonization using Proxies.ipynb">Download Jupyter notebook</a> </li> </ul> </li> </ul> -

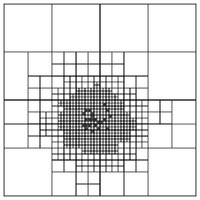

Near and Far: Separating out High-Rank Interactions

<li data-jstree='{"icon": "fa fa-file-o"}'> Ewald Summation </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Quadtrees and Octrees </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Tree Codes </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Fast Multipole Methods </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Solving the Linear System: Iterative and Direct Methods </li> </ul> -

Outlook: Building a Fast PDE Solver

<li data-jstree='{"icon": "fa fa-file-o"}'> Prototypical elliptic PDEs </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Fundamental Solutions </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Layer Potentials </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Integral Equations </li> </ul> -

Going Infinite: Integral Operators and Functional Analysis

<li data-jstree='{"icon": "fa fa-file-o"}'> Operators </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Boundedness </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Compactness </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Riesz and Fredholm </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Spectral Theory </li> <li data-jstree='{"icon": "fa fa-book"}'> <a href="https://www.springer.com/us/book/9781461495925">Kress: Linear Integral Equations (UIUC Ebook available)</a> </li> </ul> -

Singular Integrals and Potential Theory

<li data-jstree='{"icon": "fa fa-file-o"}'> Green's Formula </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Jump Conditions </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Exterior Domains </li> </ul> -

Boundary Value Problems

<li data-jstree='{"icon": "fa fa-file-o"}'> Green's Formula </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Jump Conditions </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Exterior Domains </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Uniqueness </li> </ul> -

Back from Infinity: Discretization

<li data-jstree='{"icon": "fa fa-file-o"}'> Nyström and Galerkin </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Unstructured and High Order: Meshes and Elements </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Polynomial Spaces and Mappings </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/09-discretization/2D Interpolation Nodes.html">Demo: 2D Interpolation Nodes</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/09-discretization/2D Interpolation Nodes.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/09-discretization/2D Interpolation Nodes.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/09-discretization/2D Interpolation Nodes.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/09-discretization/Choice of Nodes for Polynomial Interpolation.html">Demo: Choice of Nodes for Polynomial Interpolation</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/09-discretization/Choice of Nodes for Polynomial Interpolation.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/09-discretization/Choice of Nodes for Polynomial Interpolation.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/09-discretization/Choice of Nodes for Polynomial Interpolation.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/09-discretization/Vandermonde conditioning.html">Demo: Vandermonde conditioning</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/09-discretization/Vandermonde conditioning.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/09-discretization/Vandermonde conditioning.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/09-discretization/Vandermonde conditioning.ipynb">Download Jupyter notebook</a> </li> </ul> </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/09-discretization/Visualizing the 2D PKDO Basis.html">Demo: Visualizing the 2D PKDO Basis</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/09-discretization/Visualizing the 2D PKDO Basis.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/09-discretization/Visualizing the 2D PKDO Basis.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/09-discretization/Visualizing the 2D PKDO Basis.ipynb">Download Jupyter notebook</a> </li> </ul> </li> </ul> -

Computing Integrals: Approaches to Quadrature

<li data-jstree='{"icon": "fa fa-file-o"}'> Families of Quadrature Methods </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Quadrature for Fourier Modes </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Quadrature by Expansion (QBX) </li> <li data-jstree='{"icon": "fa fa-keyboard-o"}'> <a href="repocur:demos/upload/10-quadrature/Kussmaul-Martensen quadrature.html">Demo: Kussmaul-Martensen quadrature</a> <ul> <li data-jstree='{"icon": "fa fa-newspaper-o"}'> <a href="repocur:demos/upload/10-quadrature/Kussmaul-Martensen quadrature.html">View on the web</a> </li> <li data-jstree='{"icon": "fa fa-terminal"}'> <a href="repocur:demos/upload/10-quadrature/Kussmaul-Martensen quadrature.py">Download Python script</a> </li> <li data-jstree='{"icon": "fa fa-download"}'> <a href="repocur:demos/upload/10-quadrature/Kussmaul-Martensen quadrature.ipynb">Download Jupyter notebook</a> </li> </ul> </li> </ul> -

More PDEs

<li data-jstree='{"icon": "fa fa-file-o"}'> Helmholtz </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Maxwell's Equations </li> <li data-jstree='{"icon": "fa fa-file-o"}'> Boundary and Volume Potentials </li> </ul>

CAUTION!

These scribbled PDFs are an unedited reflection of what we wrote during class. They need to be viewed in the context of the class discussion that led to them. See the lecture videos for that.

If you would like actual, self-contained class notes, look in the outline above.

These scribbles are provided here to provide a record of our class discussion, to be used in perhaps the following ways:

- as a way to cross-check your own notes

- to look up a formula that you know was shown in a certain class

- to remind yourself of what exactly was covered on a given day

By continuing to read them, you acknowledge that these files are provided as supplementary material on an as-is basis.

- scribbles-2017-08-30.pdf

- scribbles-2017-09-01.pdf

- scribbles-2017-09-06.pdf

- scribbles-2017-09-08.pdf

- scribbles-2017-09-13.pdf

- scribbles-2017-09-15.pdf

- scribbles-2017-09-20.pdf

- scribbles-2017-09-22.pdf

- scribbles-2017-09-25.pdf

- scribbles-2017-09-29.pdf

- scribbles-2017-10-04.pdf

- scribbles-2017-10-06.pdf

- scribbles-2017-10-11.pdf

- scribbles-2017-10-13.pdf

- scribbles-2017-10-18.pdf

- scribbles-2017-10-20.pdf

- scribbles-2017-10-25.pdf

- scribbles-2017-10-27.pdf

- scribbles-2017-11-01.pdf

- scribbles-2017-11-03.pdf

- scribbles-2017-11-08.pdf

- scribbles-2017-11-10.pdf

- scribbles-2017-11-15.pdf

- scribbles-2017-11-17.pdf

Computing

We will be using Python with the libraries numpy, scipy and matplotlib for assignments. No other languages are permitted. Python has a very gentle learning curve, so you should feel at home even if you've never done any work in Python.

Virtual Machine Image

While you are free to install Python and Numpy on your own computer to do homework, the only supported way to do so is using the supplied virtual machine image.

Books and Papers

-

For randomized linear algebra: Finding structure with randomness: Probabilistic algorithms for constructing approximate matrix decompositions by Halko/Martinsson/Tropp

-

For FMMs:

-

A fast algorithm for particle simulations by Greengard and Rokhlin.

-

A Fast Adaptive Multipole Algorithm for Particle Simulations

-

-

For numerical linear algebra:

-

Golub and van Loan is the definitive reference, with an emphasis on reference

-

Trefethen and Bau is less comprehensive but more approachable

-

Mike Heath's sci comp book also contains almost everything you actually need to know about numerical LA

-

-

For the functional analysis basics we need as well as the integral equation material, the best match for the course is Kress's book on linear integral equations. The references in the notes are for the second edition, linked above. A third edition is also available.

These can be downloaded as an ebook through the UIUC library's subscription to SpringerLink.

Previous editions of this class

Related Classes Elsewhere

- Alex Barnett (Dartmouth)

- Shravan Veerapaneni (Michigan)

- Leslie Greengard (NYU)

- Gunnar Martinsson: UC Boulder Dartmouth

- Jianlin Xia (Purdue)

- Francesco Andriulli (ENS TELECOM Bretagne)

- Mark Tygert

Python Help

- Python tutorial

- Facts and myths about Python names and values

- Dive into Python 3

- Learn Python the hard way

- Project Euler (Lots of practice problems)

Numpy Help

- Introduction to Python for Science

- The SciPy lectures

- The Numpy MedKit by Stéfan van der Walt

- The Numpy User Guide by Travis Oliphant

- Numpy/Scipy documentation

- More in this reddit thread

- Spyder (a Python IDE, like Matlab) is installed in the virtual machine. (Applications Menu > Development > Spyder)

- An introduction to Numpy and SciPy

- 100 Numpy exercises